Scikit-Learn also has a general class, MultiOutputRegressor, which wraps a single-output regression model and fits one regressor separately to each target. Your code would then look something like this (using k-NN as an example): from sklearn.neighbors import KNeighborsRegressor; from sklearn.multioutput import MultiOutputRegressor; X = np.random.random((10,3)); y = np.random.random((10...

In this blog post, I want to focus on the concept of linear regression and mainly on its implementation in Python. Linear regression is a statistical model that examines the linear relationship between two (simple linear regression) or more (multiple linear regression) variables: a dependent variable and one or more independent variables.
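The MultiOutputRegressor snippet quoted above is cut off, so here is a minimal self-contained sketch of the same idea. The shape of y (two targets), the seed, and n_neighbors=3 are assumptions made for illustration; they are not taken from the original snippet.

```python
import numpy as np
from sklearn.neighbors import KNeighborsRegressor
from sklearn.multioutput import MultiOutputRegressor

rng = np.random.RandomState(0)      # fixed seed, chosen only for reproducibility
X = rng.random_sample((10, 3))      # 10 samples, 3 features (as in the snippet)
y = rng.random_sample((10, 2))      # ASSUMPTION: 2 targets; the original shape is truncated

# Wrap a single-output regressor; one clone is fitted per target column.
model = MultiOutputRegressor(KNeighborsRegressor(n_neighbors=3))
model.fit(X, y)

pred = model.predict(X)
print(pred.shape)                   # one prediction column per target
print(len(model.estimators_))       # one fitted k-NN regressor per target
```

Because each target gets its own independent regressor, this wrapper is mainly useful for estimators that cannot handle a 2-D y on their own.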

class sklearn.multioutput.RegressorChain(base_estimator, order=None, cv=None, random_state=None)

A multi-label model that arranges regressions into a chain.

Each model makes a prediction in the order specified by the chain using all of the available features provided to the model plus the predictions of models that are earlier in the chain.

Parameters:

- base_estimator: estimator. The base estimator from which the classifier chain is built.
- order: array-like, shape=[n_outputs] or 'random', optional. By default the order will be determined by the order of columns in the label matrix Y. For example, order = [1, 3, 2, 4, 0] means that the first model in the chain will make predictions for column 1 in the Y matrix, the second model will make predictions for column 3, etc. If order is 'random', a random ordering will be used.
- cv: int, cross-validation generator or an iterable, optional (default=None). Determines whether to use cross-validated predictions or true labels for the results of previous estimators in the chain. If cv is None, the true labels are used when fitting. Otherwise, possible inputs for cv are:
  - an integer, to specify the number of folds in a (Stratified)KFold,
  - a cross-validation generator,
  - an iterable yielding (train, test) splits as arrays of indices.
- random_state: int, RandomState instance or None, optional (default=None). If int, random_state is the seed used by the random number generator; if RandomState instance, random_state is the random number generator; if None, the random number generator is the RandomState instance used by np.random. The random number generator is used to generate random chain orders.

Attributes:

- estimators_: list. A list of clones of base_estimator.
- order_: list. The order of labels in the classifier chain.
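The parameters above can be tried out with a short sketch. The Ridge base estimator, the random data, and the specific order and cv values are assumptions chosen for illustration. The base estimator is passed positionally because the keyword name of the first parameter may differ between scikit-learn versions.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.multioutput import RegressorChain

rng = np.random.RandomState(0)
X = rng.random_sample((30, 4))      # 30 samples, 4 features (illustrative data)
Y = rng.random_sample((30, 3))      # 3 target columns

# order=[1, 0, 2]: the first model predicts column 1, the second column 0, the third column 2.
# cv=3: later models are trained on cross-validated predictions of earlier ones
# instead of the true labels.
chain = RegressorChain(Ridge(alpha=1.0), order=[1, 0, 2], cv=3)
chain.fit(X, Y)

pred = chain.predict(X)             # columns come back in the original order of Y
print(pred.shape)
print(chain.order_)

# Each model in the chain sees the original features plus one extra input
# per earlier model in the chain.
print([est.coef_.shape[0] for est in chain.estimators_])
```

Unlike fitting one regressor per target, a chain lets later models use the predictions of earlier ones as inputs, which is one way to exploit correlated targets.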

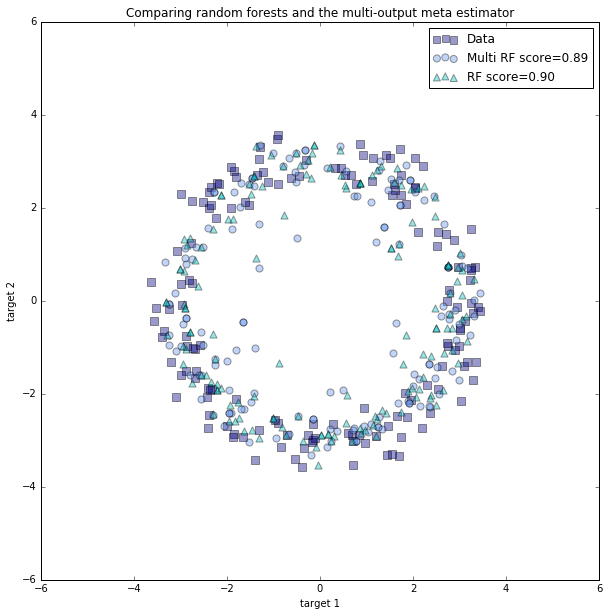

I have run into an ML problem that requires a multi-dimensional Y. Right now we are training independent models on each dimension of this output, which does not take advantage of the fact that the outputs are correlated.

I have been reading to learn more about the few ML algorithms that have been truly extended to handle multidimensional outputs. Decision trees are one of them.

Does scikit-learn use 'multi-target regression trees' when fit(X, Y) is given a multidimensional Y, or does it fit a separate tree for each dimension? I spent some time looking into this but didn't figure it out.

That does not answer my question. 'Multioutput regression support can be added to any regressor with MultiOutputRegressor. This strategy consists of fitting one regressor per target. Since each target is represented by exactly one regressor it is possible to gain knowledge about the target by inspecting its corresponding regressor. As MultiOutputRegressor fits one regressor per target it can not take advantage of correlations between targets.' If DecisionTreeRegressor does something along those lines, then that is very different from actually using all dimensions to decide a split. – Sep 5 '17 at 22:23

After more digging, the only difference between a tree fitted on points labeled with a single-dimensional Y and one fitted on points with multi-dimensional labels is the Criterion object it uses to decide splits. A Criterion can handle multi-dimensional labels, so the result of fitting a DecisionTreeRegressor will be a single regression tree regardless of the dimension of Y.

This implies that, yes, scikit-learn does use true multi-target regression trees, which can leverage correlated outputs to positive effect.
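The difference described in the answer can be checked with a small sketch. The synthetic data (two correlated targets), the tree depth, and the seeds are assumptions made for illustration. The sketch only inspects the structure of the fitted models and does not measure accuracy.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor
from sklearn.multioutput import MultiOutputRegressor

rng = np.random.RandomState(0)
X = rng.uniform(-1, 1, size=(200, 3))

# Two correlated targets built from the same underlying signal (illustrative data).
signal = np.sin(3 * X[:, 0])
Y = np.column_stack([signal, 2 * signal + 0.1 * rng.randn(200)])

# Native multi-output fit: a single tree whose nodes store one value per output.
tree = DecisionTreeRegressor(max_depth=4, random_state=0).fit(X, Y)
print(tree.n_outputs_)              # number of target columns seen during fit
print(tree.tree_.value.shape)       # (node_count, n_outputs, 1) for a regressor

# Wrapper fit: one independent tree per target column.
wrapped = MultiOutputRegressor(
    DecisionTreeRegressor(max_depth=4, random_state=0)
).fit(X, Y)
print(len(wrapped.estimators_))     # one separate tree per target
```

In the native fit every split is shared by both outputs, whereas the wrapper grows each tree without seeing the other target.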